How Colocation Rack Density Got Here — and Why It's a Problem Now

Colocation rack density has never been static, but the pace of change over the last five years has left a significant portion of the industry's existing infrastructure behind. Triton Thermal's advanced liquid cooling solutions are designed specifically for the operators navigating this gap: those running facilities built for one era of computing that are now being asked to serve a fundamentally different one.

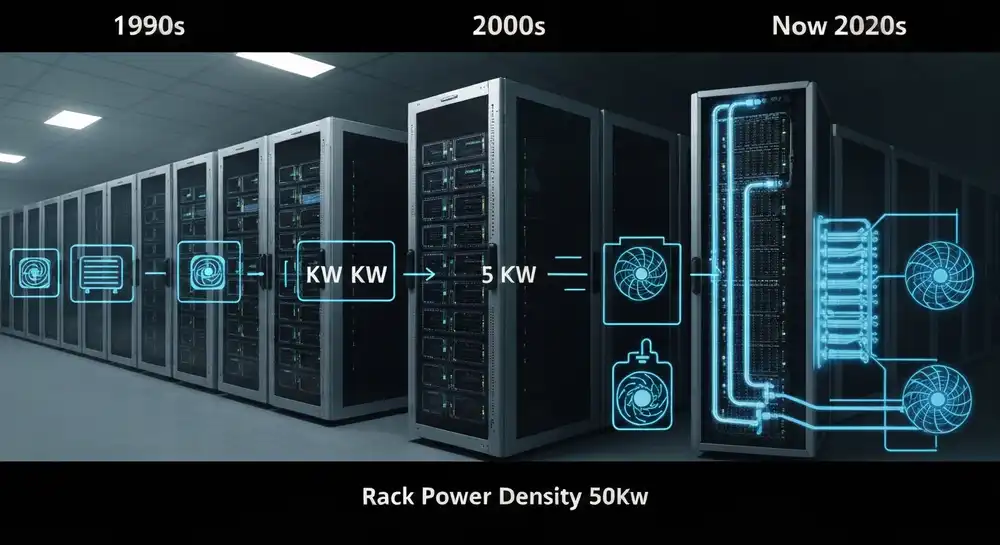

The numbers tell the story plainly. Typical rack power densities have climbed from around 5kW per rack during the mid-2000s virtualization boom to 50kW and beyond for today's AI and HPC deployments. That's a tenfold increase. The cooling infrastructure installed in colocation halls 15 to 20 years ago wasn't designed for anything close to those loads.

The Three Eras That Defined Colocation Rack Power

Understanding where the industry is today means looking at how it got here. Colocation rack density has moved through three distinct phases, each driven by a shift in what tenants were running.

The 1–5kW Era: Internet Infrastructure and Early Colo

The modern colocation market took shape during the late 1990s and early 2000s. Facilities were primarily built to house web servers, networking gear, and early enterprise workloads. Average densities ran between 1 and 3kW per rack. Air cooling was more than adequate. Raised-floor designs with CRAC units handled the load without much difficulty.

Most of the facilities built during this period — and there were a lot of them — are still operating today.

The 5–15kW Era: Virtualization and the Blade Server Shift

The mid-to-late 2000s brought virtualization, blade servers, and the first wave of cloud computing. Rack densities climbed into the 5 to 15kW range as operators consolidated workloads onto fewer, denser servers. Industry averages then stayed in that band for years: the AFCOM 2025 State of the Data Center Report puts average density at 7kW per rack as recently as 2021. Air cooling was strained but manageable for most facilities.

This is the era when most of today's large-footprint colocation campuses were built out. Operators expanded aggressively across major metros, adding millions of square feet of capacity designed around the thermal assumptions of the time. Equinix and CoreSite, among others, built their retail colo footprints largely during this window.

The 15–50kW+ Era: AI Changes the Physics

The current era has broken the old assumptions entirely. AI training and inference workloads, driven by GPU clusters running on hardware like NVIDIA's H100 and H200, routinely demand 30 to 50kW per rack. According to Schneider Electric, a rack fully loaded with the latest NVIDIA-based GPU servers requires 132kW of power, with next-generation hardware pushing that figure higher still.

That's not a problem any CRAC system was designed to solve.

What 50kW Racks Actually Demand From a Colocation Facility

Serving a 50kW rack isn't just a cooling challenge — it's a full infrastructure challenge. Power distribution, floor loading, cooling delivery, and heat rejection all have to be capable of handling the load simultaneously.

On the cooling side, the math is stark. Air cooling starts to fail above roughly 15 to 20kW per rack under normal data center conditions. Beyond that threshold, hot spots form, thermal throttling kicks in, and equipment starts running outside its rated parameters. Getting to 50kW reliably requires liquid cooling — whether that's rear door heat exchangers pulling heat off individual racks, direct-to-chip cold plates removing heat at the processor level, or a CDU-based loop serving a high-density zone.
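To make the air-cooling limit concrete, here is a rough sketch of the sensible-heat relationship between rack power and the airflow needed to carry that heat away. The air properties and the 11°C temperature rise across the rack are illustrative assumptions, not figures from any specific facility, and the function name is hypothetical.

```python
# Rough sensible-heat airflow estimate for an air-cooled rack.
# Assumed constants (not from the article): approximate sea-level air properties.
AIR_DENSITY_KG_M3 = 1.2       # approximate density of air near 20 °C
AIR_CP_J_PER_KG_K = 1005.0    # approximate specific heat of air
M3S_TO_CFM = 2118.88          # 1 cubic metre per second in cubic feet per minute


def required_airflow_cfm(rack_kw: float, delta_t_c: float = 11.0) -> float:
    """Airflow needed to remove rack_kw of heat at an assumed air temperature rise."""
    watts = rack_kw * 1000.0
    # P = density * volumetric_flow * cp * delta_T, solved for volumetric flow
    m3_per_s = watts / (AIR_DENSITY_KG_M3 * AIR_CP_J_PER_KG_K * delta_t_c)
    return m3_per_s * M3S_TO_CFM


for kw in (5, 15, 50):
    print(f"{kw:>2}kW rack: ~{required_airflow_cfm(kw):,.0f} CFM")
```

Under these assumptions, airflow scales linearly with load, so a 50kW rack needs ten times the air of a 5kW rack, on the order of 8,000 CFM through a single cabinet. That is the practical reason the cooling method has to change rather than simply scale up.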

The power distribution side is equally demanding. A single 50kW rack needs dedicated circuit capacity that most legacy colo floors don't have at the cabinet level. Schneider Electric's research found that standard rack PDU and circuit breaker configurations max out at 33 to 35kW per rack — well below what GPU deployments now require.
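The circuit arithmetic behind that kind of ceiling can be sketched with the standard three-phase power formula. The feed sizes below (415V/60A and 208V/30A), the 80% continuous-load derating, and the unity power factor are illustrative assumptions, not Schneider Electric's methodology, and the function names are hypothetical.

```python
import math


def usable_circuit_kw(line_voltage: float, breaker_amps: float,
                      continuous_derate: float = 0.8,
                      power_factor: float = 1.0) -> float:
    """Usable power of a three-phase circuit: sqrt(3) * V * I * derate * PF, in kW."""
    watts = math.sqrt(3) * line_voltage * breaker_amps * continuous_derate * power_factor
    return watts / 1000.0


def circuits_needed(rack_kw: float, circuit_kw: float) -> int:
    """Minimum number of identical circuits to carry rack_kw (no redundancy)."""
    return math.ceil(rack_kw / circuit_kw)


# Assumed example feeds, chosen for illustration only.
for label, volts, amps in (("208V / 30A", 208, 30), ("415V / 60A", 415, 60)):
    kw = usable_circuit_kw(volts, amps)
    print(f"{label}: ~{kw:.1f}kW usable, {circuits_needed(50, kw)} circuits for a 50kW rack")
```

With these inputs, a single 415V/60A feed works out to roughly 34.5kW of usable capacity, so a 50kW rack needs at least two such feeds before any A/B redundancy is counted. A cabinet wired with 208V/30A circuits needs six.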

Why Legacy Retail Colo Halls Can't Just "Turn Up" the Density

This is the part that doesn't get said plainly enough. A colocation facility built for 5 to 10kW per rack can't simply reconfigure its existing infrastructure to serve 50kW tenants. The CRAC units cooling the floor weren't sized for it. The power circuits feeding the cabinets weren't run for it. The raised-floor plenum delivering cold air wasn't designed to move the volume of air that much heat requires.

Some operators have responded by dedicating portions of new builds to high-density AI zones. That's a sound approach for greenfield projects. But most large-footprint colo operators aren't starting from a blank slate. They're managing existing campuses — often millions of square feet — where the density gap is a live problem today, not a future planning exercise.

Operators who don't have a competitive answer when a prospective AI tenant asks "what's your maximum rack power?" are losing deals. That's not a theoretical risk. It's happening now, and it's getting more acute as AI hardware deployment accelerates.

Infrastructure Density Uplift: The Retrofit Path Forward

The alternative to new construction is retrofit — and done correctly, it's both faster and more economical than building new. Infrastructure Density Uplift (IDU) is the framework for extracting higher compute capacity from an existing facility footprint by replacing inefficient air cooling with targeted liquid cooling deployment.

The approach isn't about replacing an entire facility's cooling infrastructure at once. A well-executed IDU strategy identifies the highest-priority zones — typically the aisles or cages where AI and HPC tenant demand is concentrated — and deploys liquid cooling there first. That might mean rear door heat exchangers mounted to existing racks, a CDU-based direct-to-chip loop for a new high-density cage, or a hybrid configuration that lets the facility serve both legacy air-cooled tenants and new high-density deployments from the same floor.
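The zone-first logic described above can be sketched as a simple ranking exercise: compare what each zone was built to support against what tenants are asking for, then work on the largest gaps first. The `Zone` structure, the zone names, and every number below are hypothetical, made up purely for illustration. A real assessment would also weigh power availability, floor loading, and heat rejection.

```python
from dataclasses import dataclass


@dataclass
class Zone:
    name: str
    design_kw_per_rack: float     # what existing cooling and power support
    requested_kw_per_rack: float  # what tenants in the zone are asking for
    racks: int

    @property
    def gap_kw(self) -> float:
        """Total unmet capacity across the zone; zero if demand is already met."""
        per_rack_gap = max(0.0, self.requested_kw_per_rack - self.design_kw_per_rack)
        return per_rack_gap * self.racks


def prioritize(zones: list[Zone]) -> list[Zone]:
    """Return only zones with unmet demand, largest total gap first."""
    return sorted((z for z in zones if z.gap_kw > 0),
                  key=lambda z: z.gap_kw, reverse=True)


# Hypothetical floor: all values are illustrative assumptions.
zones = [
    Zone("Cage A (legacy enterprise)", 8, 8, 40),
    Zone("Cage B (AI inference)", 10, 35, 20),
    Zone("Aisle 12 (HPC)", 10, 50, 16),
]

for z in prioritize(zones):
    print(f"{z.name}: {z.gap_kw:.0f}kW of unmet demand")
```

In this made-up example the legacy cage drops out entirely and stays on air cooling, while the two high-density zones become the candidates for rear door heat exchangers or a direct-to-chip loop.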

The result is a facility that can compete for AI tenant business without the $500 million price tag of a new campus.

Triton Thermal's vendor-neutral approach is particularly well-suited to this kind of retrofit. Because the work isn't tied to one manufacturer's product line, the cooling technology for each zone can be selected to fit the facility's existing infrastructure rather than whatever a single vendor happens to sell.