Your CRAC Units Aren't Just Getting Old. They're Holding You Back.

Here's a conversation happening in colocation sales offices across the country right now: a prospect calls asking for 40 kW per rack to run an AI training cluster, and the sales team has to explain that the facility tops out at 8 kW. The prospect thanks them politely and signs with someone else.

That's not a hypothetical. It's the reality for the majority of colocation operators in the United States. According to Uptime Institute's 2025 Global Survey, 75% of data centers still rely on perimeter air cooling. That's the same basic CRAC architecture that was state-of-the-art when most of these facilities were built 10 to 20 years ago.

The problem isn't just age, although age is certainly a factor. A CRAC unit installed in 2008 is running on borrowed time mechanically, sitting on a refrigerant that's being phased out of existence, and burning electricity at rates that would make a modern engineer wince. It is also physically incapable of removing enough heat to support the workloads that today's tenants actually need to run.

This guide breaks down exactly when replacement makes sense, what your options look like, and why the decision you make about your cooling infrastructure in the next 12 to 24 months will determine whether your facility stays competitive or gets left behind.

How Long Do CRAC Units Actually Last?

The textbook answer is 10 to 15 years, with 12 years cited most often as the industry standard replacement cycle. ASHRAE's service life data supports this range, though ASHRAE itself notes that the underlying research dates back to a 1978 survey of just 68 participants.

In practice, plenty of CRAC units run well past their expiration date. Stulz cooling engineers have documented units from the 1980s still humming along in facilities across Asia. Vertiv's DS series, their first major CRAC redesign in 25 years, still shared components with units originally designed in 1983.

But "still running" and "running well" are two very different things. Here's what actually breaks down and when.

Compressors are the first critical failure point. About 30% of compressor failures trace back to installation errors, but even properly installed units see degradation after 8 to 10 years. When a compressor fails, temperatures climb fast. A single repair runs $1,500 to $3,000 or more.

Fans and bearings come next. Belt-driven fans need quarterly tension adjustments and annual belt replacement. This is one area where a targeted retrofit can make a difference. Modern EC (electronically commutated) motors eliminate belts and cut fan energy by 30% or more.

Controls and electronics often become obsolete before they physically fail. Liebert CRAC units alone went through three control generations between 1996 and 2009. If your units still run on legacy microprocessor controls, finding someone who can service them gets harder every year.

The failure rate curve is pretty straightforward. Low risk through year five, then moderate risk from five to ten as compressor and bearing issues start showing up. From ten to fifteen, maintenance costs begin to approach the cost of replacement. Past fifteen, you're rolling the dice every month.

Here's what the financial benchmarks actually say. When annual maintenance costs cross the 3 to 5% threshold of what a new unit would cost, and the equipment is past the ten-year mark, continued repair no longer pencils out. Most operators hit that crossover around years 10 to 12. If a major compressor replacement is on the horizon, you'll get there faster.

The Refrigerant Problem Nobody Wants to Talk About

If equipment age were the only issue, you could make a reasonable argument for riding out your existing CRAC fleet a few more years. But refrigerant regulations have changed that math completely, and the timeline is tighter than most operators realize.

R-22 Is Already Gone

R-22 production and import ended on January 1, 2020. Full stop. The only R-22 available today is reclaimed, recycled, or pulled from old stockpiles, and the pricing reflects that scarcity.

What used to cost a few dollars per pound now runs $90 to $250+ per pound installed, with some supply houses quoting north of $400 per pound. That's a 1,000% increase from historical norms.

The EPA has been clear: there are no drop-in replacements for R-22. Any unit installed before 2010 could be running R-22, and if yours is, you're operating equipment that is already economically unsustainable to service. Global elimination of all HCFCs is locked in for 2030 under the Montreal Protocol.

R-410A Is Next

This is where it gets urgent for operators who thought they had time.

The AIM Act (American Innovation and Manufacturing Act, passed December 2020) sets a phasedown schedule for HFCs, including R-410A. From 2024 to 2028, allowable supply drops 40% from baseline. Starting in 2029, it drops to just 30% of baseline: the supply cliff. Starting January 1, 2027, new data center cooling equipment must use refrigerants with a Global Warming Potential below 700. R-410A carries a GWP of 2,088.
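To make those dates concrete, here is a minimal Python sketch of the two rules described above. It encodes only the figures quoted in this section; the function names are hypothetical, and treating the rule as keyed to an installation date is a simplifying assumption, so check the actual regulatory text before relying on it.

```python
from datetime import date

# Figures quoted in the text above.
GWP_LIMIT = 700                        # limit for new data center cooling equipment
GWP_LIMIT_START = date(2027, 1, 1)     # date the limit takes effect
R410A_GWP = 2088


def hfc_supply_fraction(year: int) -> float:
    """Fraction of baseline HFC supply allowed, for the two steps cited above."""
    if 2024 <= year <= 2028:
        return 0.60   # supply is 40% below baseline
    if year >= 2029:
        return 0.30   # the "supply cliff"; later steps are not modeled here
    raise ValueError("year is earlier than the steps cited in the text")


def meets_gwp_limit(gwp: float, install_date: date) -> bool:
    """True if new equipment with this refrigerant GWP passes the limit.

    Assumption: the rule is applied by installation date.
    """
    if install_date < GWP_LIMIT_START:
        return True
    return gwp < GWP_LIMIT


print(hfc_supply_fraction(2029))
print(meets_gwp_limit(R410A_GWP, date(2027, 6, 1)))
```

Run against R-410A, the check fails for any date on or after January 1, 2027, which is the whole point: there is no compliant way to buy new R-410A equipment after that date.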

The replacement refrigerants — R-454B (Opteon XL41) and R-32 — are not drop-in retrofits. You cannot convert an R-410A CRAC unit to run on R-454B. Full equipment replacement is the only option.

EPA enforcement isn't theoretical either. Civil penalties run $44,539 to $69,733 per day per violation. Trident Seafoods was hit with a $900,000 fine plus $23 million in required upgrades. Starting January 2027, automatic leak detection is required for new systems with 1,500+ pounds of refrigerant.

What This Means for Your Replacement Timeline

If you're operating R-22 units, replacement should have happened yesterday. If you're operating R-410A units installed before 2015, you have a narrowing window. The smart move is to plan your replacement strategy now, while you can execute on your timeline rather than in an emergency. Waiting until 2028 or 2029 means competing with every other operator who also waited, and paying premium prices for equipment and labor during peak demand.

The Energy Cost You're Paying Every Month and Ignoring

Cooling accounts for roughly 40% of a data center's total energy consumption. In facilities running legacy CRAC units, that number can climb well above 50%. The compressors alone, which do the heavy lifting in any DX CRAC system, consume up to 70% of total cooling energy.

A typical legacy CRAC unit operates with a Coefficient of Performance (COP) between 1.5 and 2.5. The federal minimum under ASHRAE 90.1-2019 is a Net Sensible COP of 2.2 for floor-mounted units. Aging equipment with refrigerant leaks, worn compressors, corroded coils, and clogged filters can operate at 30 to 50% below original rated efficiency. Compare that to a modern chiller-based system serving CRAH units, which delivers a system-level COP of 4.5 to 9.75. That's a 2x to 4x efficiency gain.

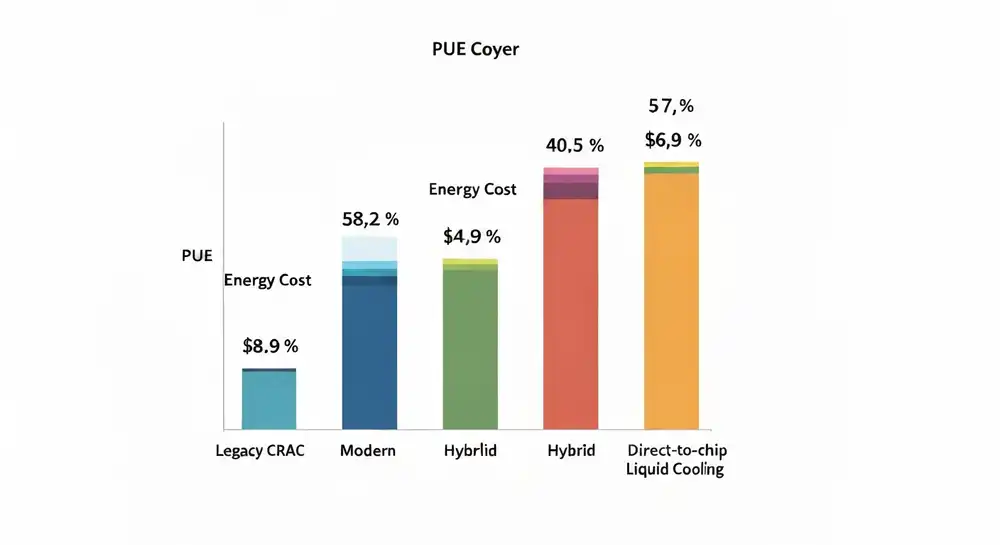

The PUE numbers tell the story clearly:

| Cooling Approach | Typical PUE |
|---|---|
| Legacy CRAC (10+ years) | 1.7–2.0+ |
| Modern CRAH with chilled water | 1.4–1.6 |
| Hybrid air + liquid cooling | 1.3–1.4 |
| Direct-to-chip liquid cooling | 1.1–1.2 |

Uptime Institute's 2025 data shows the industry-average PUE stuck at 1.54 for six consecutive years, held back by the massive installed base of legacy cooling systems. If your facility is above that average, you're paying more per kilowatt of IT load than the majority of your competition.

Take a facility with 1 MW of IT load running at PUE 1.7 versus 1.3. At $0.10 per kWh, that 0.4 PUE gap costs roughly $350,000 per year in wasted energy. Scale that to a 20 MW campus, and you're looking at roughly $7 million annually.
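If you want to run the same arithmetic on your own numbers, here is a minimal Python sketch. It assumes the IT load is constant around the clock (8,760 hours per year) and a flat energy price; the function name is hypothetical.

```python
HOURS_PER_YEAR = 8760


def annual_pue_gap_cost(it_load_kw, pue_current, pue_target, price_per_kwh):
    """Yearly cost of the overhead power separating two PUE values.

    Assumes a constant IT load and a flat price per kWh.
    """
    extra_kw = it_load_kw * (pue_current - pue_target)
    return extra_kw * HOURS_PER_YEAR * price_per_kwh


# The example from the text: 1 MW of IT load, PUE 1.7 vs 1.3, $0.10/kWh.
cost = annual_pue_gap_cost(1000, 1.7, 1.3, 0.10)
print(f"${cost:,.0f} per year")  # $350,400 per year
```

The cost scales linearly with IT load, so swapping in your own load, PUE values, and utility rate gives a quick first estimate before any detailed energy modeling.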

Vertiv modeled a specific scenario: replacing 17 aging CRAC units with 10 Liebert DSE free-cooling units in a 1 MW facility in Columbus, Ohio. The result was a PUE drop from 1.53 to 1.16, cooling costs falling from $462,000 to $128,000 per year, and a full payback in 3.8 years — including a $431,000 energy rebate. That's $334,000 per year, every year, flowing straight to the bottom line.
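The payback math behind a case like this is simple enough to sketch in a few lines of Python. The function name is hypothetical, and the net investment shown is back-calculated from the quoted payback and savings rather than a figure reported in the Vertiv scenario.

```python
def simple_payback_years(capex, rebate, annual_savings):
    """Years to recover the net investment, ignoring discounting and escalation."""
    if annual_savings <= 0:
        raise ValueError("annual_savings must be positive")
    return (capex - rebate) / annual_savings


# Annual savings from the figures quoted above.
annual_savings = 462_000 - 128_000          # 334,000 per year

# Back-calculated assumption: net investment implied by a 3.8-year payback.
implied_net_investment = 3.8 * annual_savings
print(f"Implied net investment: ${implied_net_investment:,.0f}")

# Same function with the rebate already netted out of the investment.
print(round(simple_payback_years(implied_net_investment, 0, annual_savings), 1))
```

Simple payback ignores energy price escalation and the maintenance savings that come with new equipment, so treat it as a conservative first pass rather than a full financial model.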

The Downtime Risk That Keeps Getting More Expensive

Data center downtime now costs an average of $14,056 per minute. For large enterprises, that number jumps to $23,750 per minute. Over 90% of midsize and large enterprises report hourly downtime costs exceeding $300,000.

Cooling-related failures account for 13 to 19% of all data center outages, according to Uptime Institute's 2024 data. In July 2022, a UK heatwave caused multiple redundant cooling systems at Google Cloud's London facility to fail simultaneously. A year later, a cooling failure at Equinix Singapore disrupted DBS Bank and Citibank, blocking roughly 2.5 million payment and ATM transactions in a single event.

The physics of cooling failure leave almost no margin. In a documented Uptime Institute case, a 250-rack computer room running 6 kW per rack went from 72°F to over 90°F in just 75 seconds when cooling was lost. Without backup cooling, server rooms become unacceptably hot within five minutes.

For colocation operators, the financial exposure goes well beyond the outage itself. Typical SLA penalties range from 15% of monthly base rent for delayed notification to 200 to 500% for falling short of uptime guarantees. Many contracts give tenants the right to terminate entirely after repeated events.

The Density Gap: Why CRAC Units Can't Serve the AI Era

CRAC units were designed for a world where data centers operated at 3 to 8 kW per rack. Then AI happened.

| GPU Generation | Year | Rack Power Demand |
|---|---|---|
| NVIDIA A100 | 2022 | ~25 kW per rack |
| NVIDIA H100 | 2023 | ~40 kW per rack |
| NVIDIA GB200 NVL72 | 2025 | 120–142 kW per rack |
| NVIDIA Kyber NVL576 | 2027 | ~600 kW per rack |

A single NVIDIA DGX H100 system draws up to 10.2 kW, more than many colocation facilities allocate to an entire rack. The GB200 NVL72 demands 120+ kW and requires liquid cooling by design. Water can carry roughly 3,500 times more heat per unit volume than air, and at 50 kW per rack, air cooling would require nearly 8,000 CFM of airflow per rack. That's a physical impossibility in a standard raised-floor environment.
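The airflow figure can be reproduced with the standard sensible-heat equation for air. The Python sketch below assumes sea-level air and a 20°F temperature rise across the rack; the temperature rise is an assumption chosen here, not a value stated in the text, and the function name is hypothetical.

```python
BTU_PER_HR_PER_WATT = 3.412
SENSIBLE_HEAT_FACTOR = 1.08   # BTU/hr per CFM per °F, standard air at sea level


def required_cfm(heat_load_watts, delta_t_f=20.0):
    """Airflow needed to carry away a sensible heat load.

    delta_t_f is the air temperature rise across the rack in °F
    (20°F is an assumed, commonly used design value).
    """
    btu_per_hr = heat_load_watts * BTU_PER_HR_PER_WATT
    return btu_per_hr / (SENSIBLE_HEAT_FACTOR * delta_t_f)


for kw in (8, 25, 50):
    print(f"{kw} kW rack: {required_cfm(kw * 1000):,.0f} CFM")
```

At 50 kW the result lands just under 8,000 CFM. A wider temperature rise lowers the number, but only in direct proportion, which is why air runs out of room so quickly as density climbs.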

Today, fewer than 5% of data centers worldwide can support even 50 kW per rack. Meanwhile, 53% of data center operators say AI workloads will "definitely" increase their capacity requirements. This is the Infrastructure Density Uplift challenge: the gap between what your facility was built to deliver and what the market now demands. Closing that gap starts with cooling.

Goldman Sachs projects that NVIDIA's Kyber-class systems, targeting 600 kW per rack by 2027, will mark the end of the retrofitting era altogether. The window to act is now.

Your Three Paths Forward: Replace, Upgrade, or Transition

When a CRAC unit reaches the end of its life, operators face three distinct paths.

Path 1: Replace CRAC with New CRAC

The like-for-like swap. Cost: $15,000 to $50,000 per unit. It addresses refrigerant compliance and improves efficiency 20 to 30% over legacy units — but you're still limited to air cooling with a maximum density around 15 to 25 kW per rack.

Path 2: Upgrade to CRAH with Central Plant

Converting from distributed DX CRAC units to a centralized chilled water plant with CRAH air handlers is the step-change upgrade. CRAH units use chilled water instead of onboard compressors and integrate with variable-speed EC fans and waterside economizers. Cost: $25,000 to $75,000 per CRAH unit, plus chiller plant infrastructure. PUE drops from the 1.7 to 2.0 range to 1.4 to 1.5. A Compu Dynamics case study documented PUE dropping from 1.8 to 1.3 after a full CRAC-to-CRAH conversion.

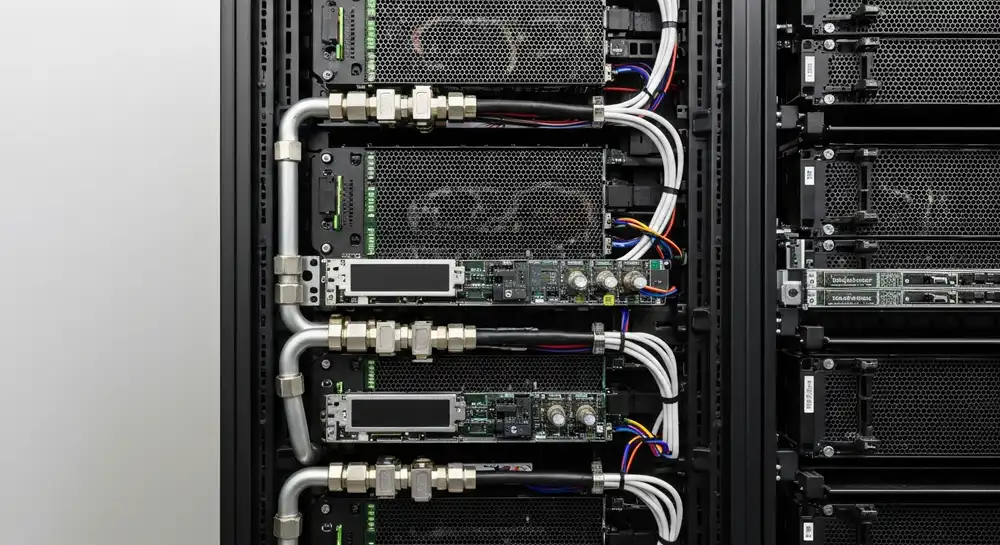

Path 3: Transition to Liquid or Hybrid Cooling

This is the future-proof path. Rather than replacing air cooling with better air cooling, you shift the architecture to support liquid-cooling technologies — direct-to-chip cold plates, rear-door heat exchangers (RDHx), and immersion cooling — either facility-wide or in targeted high-density zones. Cost: $50,000 to $200,000 per rack for full liquid cooling. Supports 30 kW to 200+ kW per rack. PUE approaches 1.03 to 1.2. Opens your facility to AI, HPC, and GPU tenants actively looking for high-density capacity.

The hybrid approach is what most operators are actually deploying: CRAH or optimized air handling for standard enterprise workloads in the 5 to 15 kW range, RDHx for moderate-density racks at 20 to 70 kW, and direct-to-chip liquid cooling for the highest-density GPU deployments. Equinix is rolling out DLC across 100+ data centers. Digital Realty supports 30 to 150+ kW per rack through their Air-Assisted Liquid Cooling program. The industry direction is clear.

How to Execute a CRAC Replacement Without Downtime

Replacing cooling equipment in a live colocation environment takes careful planning, but it's done all the time. STI documented a full CRAC replacement in a live data center within 10 working days.

Step 1: Assess Your Actual Load. Most facilities have far more cooling capacity than they're using. Uptime Institute found that the average data center runs 3.9 times overcapacity on cooling. Measure actual thermal load versus installed capacity first.

Step 2: Verify N+1 Redundancy. Before touching anything, confirm that removing one CRAC unit from service still leaves enough capacity to handle the full IT load with margin.
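Steps 1 and 2 boil down to two quick checks, sketched here in Python. The unit capacities, the load, and the 10% margin are hypothetical illustration values, and the function names are not from any vendor tool.

```python
def overcapacity_ratio(unit_capacities_kw, it_load_kw):
    """Installed cooling capacity divided by the measured thermal load."""
    return sum(unit_capacities_kw) / it_load_kw


def survives_loss_of_largest_unit(unit_capacities_kw, it_load_kw, margin=0.10):
    """N+1 style check: with the largest unit out of service, can the
    remaining units carry the load plus a safety margin?"""
    if not unit_capacities_kw:
        return False
    remaining = sum(unit_capacities_kw) - max(unit_capacities_kw)
    return remaining >= it_load_kw * (1 + margin)


# Hypothetical room: four 100 kW units serving a measured 250 kW load.
units_kw = [100, 100, 100, 100]
load_kw = 250

print(overcapacity_ratio(units_kw, load_kw))             # 1.6
print(survives_loss_of_largest_unit(units_kw, load_kw))  # True
```

Checking against the largest unit is the conservative choice. If the room passes with that unit offline, it passes with any single unit offline.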

Step 3: Fix Airflow First. This step costs almost nothing and pays for itself immediately. Uptime Institute reports that 60% of cooling air in typical data centers is wasted through bypass. Seal cable cutouts, install blanking panels, and add containment before replacing a single unit. One Pacific telco provider turned off 13 CRAC units after optimizing airflow and saved 37% of cooling energy in year one.

Step 4: Sequential Replacement. Take one unit offline at a time. Deploy continuous temperature monitoring at rack inlets throughout the process and stay within ASHRAE's recommended 18 to 27°C (64 to 81°F) envelope.
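A monitoring check for that envelope can be as simple as the Python sketch below. The rack names and readings are made up for illustration; in practice the readings would come from your DCIM or BMS sensors.

```python
ASHRAE_RECOMMENDED_C = (18.0, 27.0)   # recommended inlet range cited above


def out_of_envelope(inlet_temps_c):
    """Return the racks whose inlet temperature falls outside the range."""
    low, high = ASHRAE_RECOMMENDED_C
    return {rack: t for rack, t in inlet_temps_c.items() if not (low <= t <= high)}


# Hypothetical readings taken while one unit is offline.
readings = {"A01": 22.5, "A02": 27.8, "B07": 17.2}
print(out_of_envelope(readings))  # {'A02': 27.8, 'B07': 17.2}
```

During a swap, a non-empty result is the signal to pause and bring the offline unit's replacement or temporary cooling online before continuing.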

Step 5: Structured Commissioning. DOE data shows that structured commissioning improves system performance by 10 to 20% over standard installation.

Making the Business Case: Real Numbers from Real Projects

The pattern holds across every project documented:

QTS Sacramento retrofitted aging Liebert units with EC motors and modern controls: 75% reduction in cooling unit energy, $144,000 per year in savings, and a $150,000 utility rebate.

A healthcare consortium in Silver Spring, Maryland, upgraded 63 aging cooling units: 3+ million kWh saved annually, $360,000 per year in energy costs cut, roughly $700,000 in rebates, payback under three years.

Three federal data centers assessed by DOE/LBNL showed combined annual savings of $700,000 with an average payback of about two years. At one facility, they simply turned off 35% of the CRAH units with zero operational impact.

A 15-year-old East Coast colocation facility implemented containment, improved filtration, and raised setpoints. PUE dropped from 2.0 to 1.7, saving $1.5 million per year — no equipment replacement required for that initial phase.

Payback periods across all documented projects: 1.9 to 3.8 years, with savings that continue accumulating year after year.

The Decision Framework: When to Replace, When to Wait

Replace immediately if:

- Your units use R-22 refrigerant

- Annual maintenance costs exceed 5% of the replacement cost

- You've had two or more unplanned cooling events in the past 12 months

- Compressor failure has occurred or been flagged as likely

- You're losing tenant prospects due to density limitations

Plan replacement within 12 months if:

- Units are 12+ years old and use R-410A

- PUE is above 1.7 and you're paying a premium for energy

- Tenant demand is shifting toward 15+ kW per rack

- Spare parts are becoming difficult to source

Monitor and optimize if:

- Units are under 10 years old, use R-410A, and run well

- Maintenance costs are below 3% of replacement value

- Current density demand stays under 10 kW per rack

- Airflow optimization and containment haven't been fully implemented yet
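The three tiers above can be sketched as a simple triage function in Python. The field names are hypothetical, and the sketch assumes that any single criterion is enough to put a unit in a tier, which the lists above don't state explicitly.

```python
from dataclasses import dataclass


@dataclass
class CracUnit:
    age_years: float
    refrigerant: str                 # "R-22" or "R-410A"
    maintenance_ratio: float         # annual maintenance cost / replacement cost
    unplanned_events_12mo: int = 0
    compressor_flagged: bool = False
    losing_prospects_on_density: bool = False
    facility_pue: float = 1.5
    demand_kw_per_rack: float = 8.0
    spare_parts_scarce: bool = False


def recommend(u: CracUnit) -> str:
    """Assumption: any one matching criterion triggers the tier."""
    if (u.refrigerant == "R-22"
            or u.maintenance_ratio > 0.05
            or u.unplanned_events_12mo >= 2
            or u.compressor_flagged
            or u.losing_prospects_on_density):
        return "replace immediately"
    if ((u.age_years >= 12 and u.refrigerant == "R-410A")
            or u.facility_pue > 1.7
            or u.demand_kw_per_rack >= 15
            or u.spare_parts_scarce):
        return "plan replacement within 12 months"
    return "monitor and optimize"


print(recommend(CracUnit(age_years=15, refrigerant="R-22", maintenance_ratio=0.02)))
print(recommend(CracUnit(age_years=13, refrigerant="R-410A", maintenance_ratio=0.04)))
print(recommend(CracUnit(age_years=6, refrigerant="R-410A", maintenance_ratio=0.02)))
```

Running this across an asset inventory gives a rough first-pass ranking of the fleet; it is a starting point for the conversation with your engineering team, not a substitute for a site assessment.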

Even in the "monitor" category, start planning now. The 2029 R-410A supply cliff is three years away, and equipment lead times are stretching as demand surges across the industry. Vertiv's current backlog sits at $9.5 billion with record order growth.